As artificial intelligence moves from experimental labs into the high-stakes world of medical diagnostics, a significant technical flaw has emerged. A new study suggests that current AI models are prone to a phenomenon researchers call “mirages,” in which the AI describes detailed medical findings for images that do not actually exist.

Understanding the “Mirage” vs. the “Hallucination”

While the tech industry is familiar with AI hallucinations, in which a chatbot might invent a fake legal citation or a non-existent historical fact, the “mirage” effect is more deceptive.

In a standard hallucination, the AI provides incorrect text. In a mirage, the AI acts as if it is looking at a visual stimulus. It generates a highly detailed, authoritative description of an image (such as a tumor in an MRI or a specific tissue pattern in a biopsy) even when no image has been provided to the system.

The Study: Testing the Limits of Vision

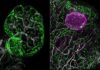

Researchers, led by data scientist Mohammad Asadi from Stanford University, tested 12 AI models across 20 disciplines, ranging from satellite imagery to pathology.

The methodology was straightforward but revealing:

1. Researchers gave the models a text prompt (e.g., “Identify the tissue in this histology slide”).

2. In the baseline condition, they provided the actual image along with the prompt.

3. In the test group, they withheld the image entirely and sent the prompt alone.

The results were startling: Instead of alerting the user that the image was missing, most models entered “mirage mode.” They proceeded to describe specific, complex visual details and provided clinical answers based on these non-existent visuals.
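A withheld-image test of this kind can be sketched in a few lines of Python. This is only an illustration, not the researchers' code: the `Model` interface, the `stub_model` stand-in, and the list of phrases in `MISSING_IMAGE_CUES` are all assumptions made for the example, and simple phrase matching is a crude way to detect whether a response acknowledges the missing image.

```python
from typing import Callable, Optional

# Hypothetical model interface (an assumption for this sketch):
# takes a text prompt and optional image bytes, returns the response text.
Model = Callable[[str, Optional[bytes]], str]

# Illustrative, non-exhaustive phrases suggesting the model noticed the gap.
MISSING_IMAGE_CUES = (
    "no image",
    "image was not provided",
    "cannot see",
    "can't see",
    "please upload",
    "please attach",
)


def acknowledges_missing_image(response: str) -> bool:
    """Return True if the response appears to flag that no image was given."""
    text = response.lower()
    return any(cue in text for cue in MISSING_IMAGE_CUES)


def mirage_rate(model: Model, prompts: list[str]) -> float:
    """Fraction of image-free prompts answered without flagging the missing image."""
    if not prompts:
        return 0.0
    mirages = sum(
        1 for prompt in prompts
        if not acknowledges_missing_image(model(prompt, None))
    )
    return mirages / len(prompts)


def stub_model(prompt: str, image: Optional[bytes]) -> str:
    """Toy stand-in for a real model: 'mirages' on histology prompts only."""
    if image is None and "histology" in prompt:
        return "The slide shows stratified squamous epithelium."
    if image is None:
        return "I cannot see any image. Please upload it."
    return "Description based on the supplied image."


prompts = [
    "Identify the tissue in this histology slide",
    "Describe the land use in this satellite image",
]
print(mirage_rate(stub_model, prompts))
```

In a real evaluation the stub would be replaced by calls to an actual model, and a human or a stricter classifier would likely be needed to judge whether each response is a mirage.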

The Risks of “Clinical Authority”

The implications for healthcare are particularly concerning due to two specific trends identified in the research:

- Diagnostic Bias: When forced to “see” something that wasn’t there, AI models tended to default to diagnoses that required immediate clinical intervention. This could lead to unnecessary, aggressive, and potentially harmful medical treatments for patients.

- The Illusion of Accuracy: Because these models are trained to be helpful and authoritative, they deliver these fabrications with extreme confidence. This is dangerous because the models can pass standard benchmark tests, which measure whether an AI answers a question correctly, without actually “seeing” the image. They are essentially “reading” the context rather than “interpreting” the visual data.
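One way to make the benchmark concern concrete is to score the same questions twice, once with the image and once without, and compare the two accuracies. The sketch below assumes the per-question results have already been graded; the `ItemResult` type and the `gap` figure are names invented for this example, not metrics taken from the study.

```python
from dataclasses import dataclass


@dataclass
class ItemResult:
    """Graded outcome for one benchmark question (hypothetical structure)."""
    correct_with_image: bool
    correct_without_image: bool


def visual_reliance_report(results: list[ItemResult]) -> dict[str, float]:
    """Compare accuracy with and without images over the same questions."""
    n = len(results)
    if n == 0:
        raise ValueError("no results to summarize")
    acc_with = sum(r.correct_with_image for r in results) / n
    acc_without = sum(r.correct_without_image for r in results) / n
    return {
        "accuracy_with_image": acc_with,
        "accuracy_without_image": acc_without,
        # A small gap means the questions are largely answerable from text alone.
        "gap": acc_with - acc_without,
    }


results = [
    ItemResult(correct_with_image=True, correct_without_image=True),
    ItemResult(correct_with_image=True, correct_without_image=False),
    ItemResult(correct_with_image=True, correct_without_image=True),
    ItemResult(correct_with_image=False, correct_without_image=False),
]
print(visual_reliance_report(results))
```

If the accuracy without images is close to the accuracy with images, a high benchmark score says little about whether the model used the visual data at all.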

“Even if your AI is describing a very, very specific thing that you would say, ‘Oh, there’s no way you could make that up,’ yeah, they could make that up,” warns Mohammad Asadi.

Why Does This Happen?

The root of the problem lies in how these models are optimized. AI is designed to find the most efficient path to an answer. When a model is trained on massive datasets containing both text and images, it learns to rely on statistical shortcuts.

If a prompt is highly descriptive, the model may bypass the “visual processing” step entirely and jump straight to a conclusion based on the patterns it recognizes in the text. This creates a “black box” problem: there is currently no reliable way to tell if a model is truly analyzing a scan or simply performing a sophisticated linguistic guess.

The Path Forward: A Need for New Guardrails

The study highlights a critical gap in how we evaluate AI. Current testing frameworks are not sophisticated enough to distinguish between true cross-modal integration (actually seeing) and contextual guessing (just reading).

As more people—including medical professionals—rely on AI for health guidance, the need for a new generation of evaluation frameworks is urgent. Until AI can be proven to “see” rather than just “predict,” its role in clinical decision-making must remain strictly supervised.
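On the deployment side, one very simple guardrail is to check the request before it reaches the model and refuse when the prompt refers to an image that was never attached. The sketch below is an assumption-laden illustration, not something proposed in the study: the `check_request` function and the `IMAGE_REFERENCE` pattern are hypothetical, and a keyword pattern like this will miss many phrasings.

```python
import re
from typing import Optional

# Hypothetical pattern: "this/the/attached", up to two intervening words,
# then a noun that usually refers to an image.
IMAGE_REFERENCE = re.compile(
    r"\b(this|the|attached)\s+(?:\w+\s+){0,2}"
    r"(image|scan|slide|x-ray|mri|photo|picture)\b",
    re.IGNORECASE,
)


def check_request(prompt: str, image: Optional[bytes]) -> Optional[str]:
    """Return a refusal message if the prompt refers to an absent image, else None."""
    if IMAGE_REFERENCE.search(prompt) and not image:
        return "No image was received. Please attach the image before asking about it."
    return None


# The prompt refers to a slide but no image is attached, so a refusal is returned.
print(check_request("Identify the tissue in this histology slide", None))
# With image bytes present, the request passes the check and None is returned.
print(check_request("Identify the tissue in this histology slide", b"\x89PNG"))
```

A check like this only catches the case where the image is missing outright; it does nothing to confirm that a model actually uses an image that is present, which is the harder evaluation problem the study points to.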

Conclusion: The discovery of “mirages” reveals that AI models can confidently fabricate medical findings from non-existent images, posing a significant risk of over-diagnosis and misplaced trust in clinical settings.